Introduction to a Telemetry Stack – Part 4

Welcome back for Part 4 of the Telemetry Stack! series. The action is steadily ramping up and, sticking with The Fast and the Furious analogy, we actually have two guest stars (read: services) featured in this blog. However, I don’t want to spoil the surprise, so you’ll just have to read on!

In this post we will focus on advanced alerting techniques, such as the deadman and standard deviation. Then we will see how we can utilize a few Prometheus / Alertmanager integrations for alert and incident management.

Prerequisites

As this blog is part of a series, it builds on what we have explored in the previous posts. Knowledge of the telemetry stack TPG (Telegraf, Prometheus, Grafana) and the basics of metrics gathering and alerting is advised. These topics can all be explored or refreshed at the following links:

- Introduction to a Telemetry Stack – Part 1

- Introduction to a Telemetry Stack – Part 2

- Introduction to a Telemetry Stack – Part 3

Advanced Alerting Overview

As perfectly stated in Xenia’s previous post, an alert is “an alarm or other signal of danger” and must be a “meaningful signal of urgency and not constant white noise that is often ignored”. There are many philosophies to alerting, but we tend to take a page from the Google SRE Book, specifically Ch. 6 – Monitoring Distributed Systems, as a guiding principle.

The power of metrics, and subsequently alerts generated from those metrics, can often encourage an “alert on all the things” behavior. And while it looks great on a coverage spreadsheet, I have found that ultimately it leads to alert oversaturation and on-call exhaustion. As an observability team, we must find a way to design meaningful alert and response contracts with our stakeholders. And as painful as it might sound, not every alert is critical. An overuse of critical or emergency will only serve to create the Cry-Wolf Phenomenon. In other words, assign severity with an overabundance of caution.

We will be exploring just a few concepts here that can turbocharge your alerting, keep your team sane, and perhaps work towards that ever lofty goal of simplicity over complexity.

Deadman Switch

Ahh, the infamous deadman switch. A powerful technique with a grotesque name that you might have interacted with at some point in your daily life! If you have ever operated a lawn mower, ridden a jet ski, or taken the subway, you have interacted with a deadman switch. It’s essentially a safety feature to disable the machine if the human operator becomes disabled for whatever reason.

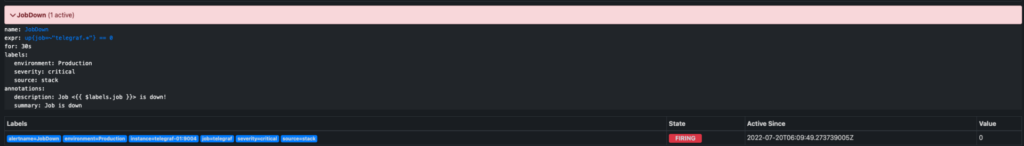

The deadman switch is typically used in monitoring systems to indicate that something went wrong in your observability pipeline. It could be Telegraf failing to gather, Prometheus failing to store, or Alertmanager going offline. It can be a form of self-monitoring or watching the watcher.

The concept is actually quite simple: send an alert when a metric we expect to be there isn’t! To be clear, we are not interested in the value of the metric but rather whether it ceases to exist.

Let’s look at some examples.

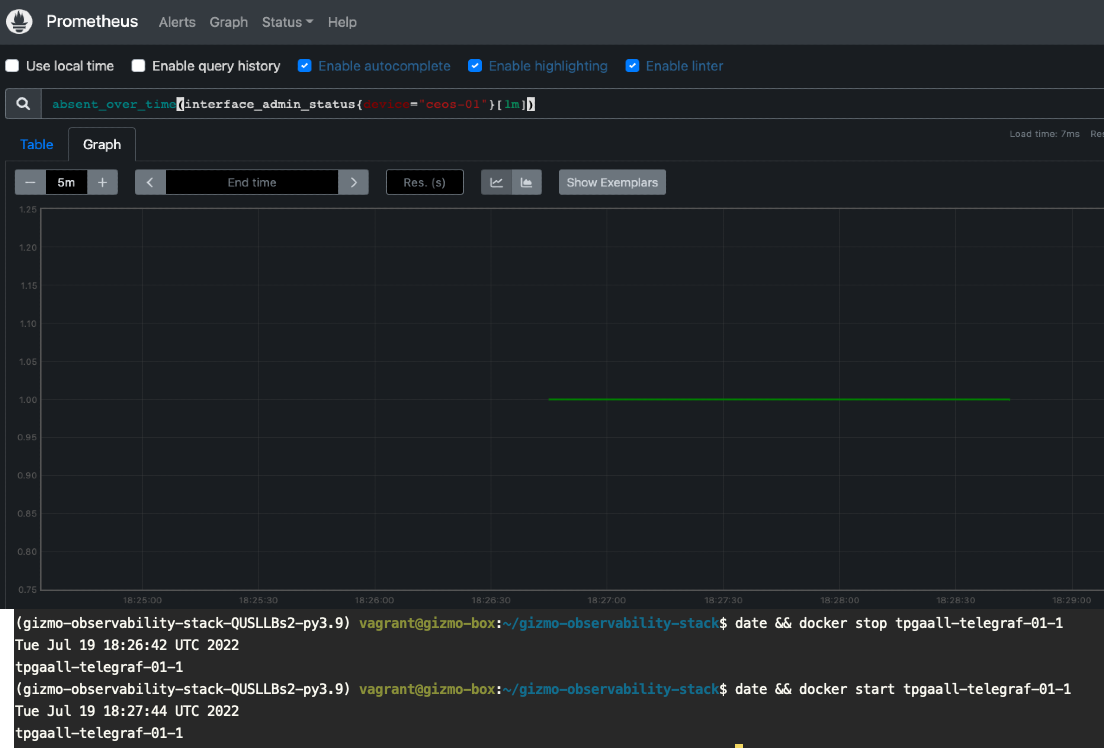

Here I will stop my Telegraf monitoring container, thus eliminating the gathering of interface metrics for device ceos-01. We will take advantage of the absent() function, which returns an empty vector if the metric exists, or a 1-element vector with the value 1 if it does not. The screenshot shows the times and graph of our now missing metrics.

This could indicate that the device stopped responding to polling for any number of reasons. If we correlate it with up{job="telegraf"} != 1, we can see whether the Telegraf poller itself stopped, which is exactly what happened.

Here is what an example alerting rule in Prometheus might look like utilizing absent().

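- name: Missing Device Metrics
  rules:
  - alert: MissingDeviceMetrics
    # absent() only carries labels taken from equality matchers in its selector,
    # so the device matcher is what populates {{ $labels.device }} below.
    expr: absent(interface_admin_status{device="ceos-01"})
    for: 2m
    labels:
      severity: high
      source: telegraf
      environment: Production
    annotations:
      summary: "Device metrics not being collected"
      description: "Metrics for {{ $labels.device }} are missing. Check device or collector"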
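The collector-side check mentioned earlier, up{job="telegraf"}, could be written as a companion rule. This is only a minimal sketch: the job name telegraf comes from the query above, while the group name, alert name, for duration, and annotation text are illustrative assumptions.

```yaml
groups:
  - name: Collector Health            # hypothetical group name
    rules:
      # up is 0 when Prometheus fails to scrape a target, so this fires
      # when the Telegraf collector itself stops answering.
      - alert: TelegrafCollectorDown  # hypothetical alert name
        expr: up{job="telegraf"} == 0
        for: 2m
        labels:
          severity: high
          source: telegraf
        annotations:
          summary: "Telegraf collector is not responding to scrapes"
          description: "Prometheus cannot scrape {{ $labels.instance }} (job {{ $labels.job }})"
```

Pairing this with the absent() rule helps separate "the device went quiet" from "the poller went quiet" when both are visible side by side.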

Now that you have seen an example of a deadman alert, can you think of other ways you would use this in a metrics pipeline? Remember, alert when something is missing!
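One possible answer is an always-firing heartbeat alert that covers the Prometheus-to-Alertmanager leg of the pipeline. The sketch below assumes some external service receives the notification and raises its own alarm when the heartbeat stops arriving; the group name, alert name, and severity value are illustrative, not taken from this series.

```yaml
groups:
  - name: Pipeline Heartbeat   # hypothetical group name
    rules:
      # vector(1) always returns a sample, so this alert fires continuously.
      # The signal is its absence: if notifications stop arriving, something
      # between Prometheus and the receiver has broken.
      - alert: Watchdog        # hypothetical alert name
        expr: vector(1)
        labels:
          severity: none
        annotations:
          summary: "Heartbeat alert that should always be firing"
```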

Recording Rules

“Recording rules allow you to precompute frequently needed or computationally expensive expressions and save their result as a new set of time series. Querying the precomputed result will then often be much faster than executing the original expression every time it is needed.” – Prometheus Docs

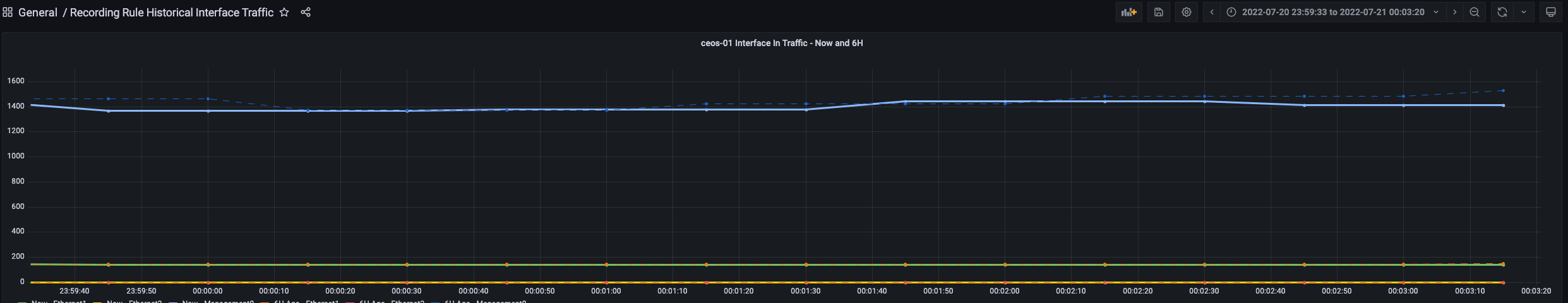

A great example of a recording rule would be pre-calculating the rate of interface traffic over a period of time and storing it as a separate metric for quick querying in alerts or graphs. In this example, I will set up a recording rule to gather the inbound interface traffic, but from six hours ago! This allows us to graph historical traffic on top of current traffic. A plain query with an offset could do that easily enough here, but think of recording rules that let you compare traffic week by week or over the last month! This opens the door to seasonality in your alerts.

Here is our recording rule. We take the rate of interface_in_octets, offset by 6h, and multiply by 8 to convert octets per second to bits per second (bps).

---
groups:
- name: recording
  rules:
  - record: interface:interface_in_octets:rate6h
    expr: rate(interface_in_octets[5m] offset 6h) * 8

It is difficult to see, but the dotted blue line is the mgmt0 traffic from six hours ago.
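The week-over-week comparison mentioned above could follow the same pattern. This is a sketch under a few assumptions: it reuses the interface_in_octets counter, the record name is hypothetical, and Prometheus must retain at least a week of data for the offset to return samples.

```yaml
groups:
  - name: recording
    rules:
      # Same 5-minute rate as the six-hour rule, shifted back one week
      # and converted from octets per second to bits per second.
      - record: interface:interface_in_octets:rate5m_offset1w   # hypothetical name
        expr: rate(interface_in_octets[5m] offset 1w) * 8
```

Graphing this series next to the current rate gives a same-time-last-week baseline, which is one simple way to account for weekly seasonality.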

Standard Deviation and Anomaly Detection

Let’s pretend that we have been tasked with creating a rule to alert on network device CPU usage for multiple device vendors in our environment. One manufacturer might set the “normal” CPU load at anything less than 80%, while another might consider anything higher than 60% to be a problem. How can we solve this without creating tens if not hundreds of threshold rules and variations of these rules? Answer: Standard deviation.

“In statistics, the standard deviation is a measure of the amount of variation or dispersion of a set of values.[1] A low standard deviation indicates that the values tend to be close to the mean (also called the expected value) of the set, while a high standard deviation indicates that the values are spread out over a wider range.” – Wikipedia

Standard deviation is a fantastic method to implement to find potentially anomalous behavior and further free ourselves from rule and alert overload. Instead of very tedious and specific threshold alerts, we can rely on basic statistics that are more generic, thus encompassing potentially more uses.

Another thing to note here is that threshold alerting is a fantastic (sarcasm) way to create false positives that will ultimately have your team ignoring these alerts and suffering mightily during an on-call rotation. In other words, use them with caution!

What does this mean? If we record (remember those recording rules?) the long-running average and standard deviation of the metric we are interested in, we can determine how many standard deviations the current (now) value sits away from the average (mean). That number is called the z-score.
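Written out, with x as the current value, μ as the long-running mean, and σ as the standard deviation over the same window, the z-score is:

```latex
z = \frac{x - \mu}{\sigma}
```

As an illustration with made-up numbers: if a device averages 40% CPU with a standard deviation of 5%, a reading of 58% gives z = (58 - 40) / 5 = 3.6.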

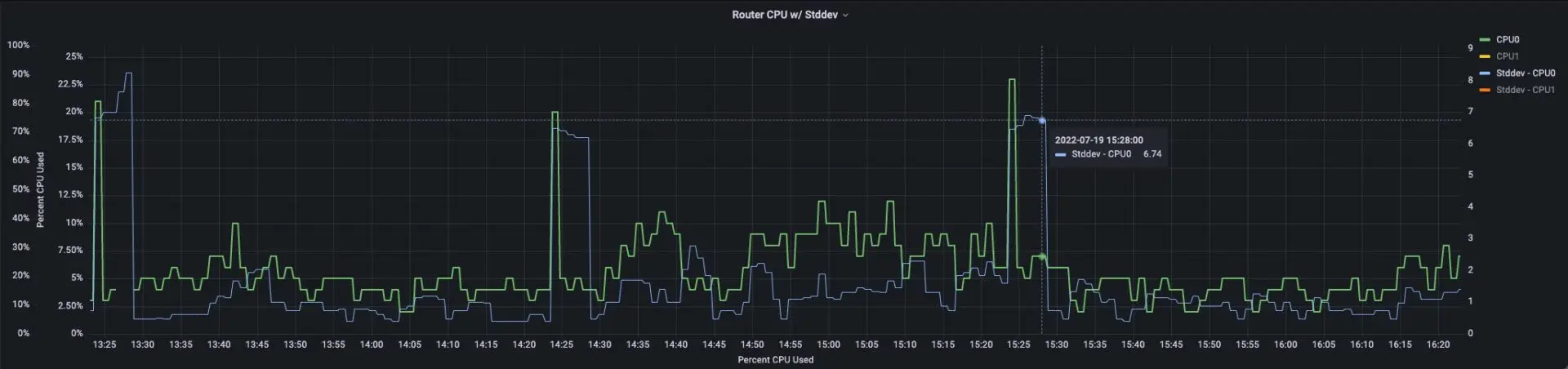

Here is an example of Percentage CPU used on a device (green line) and the plot of the standard deviation for a small average window (blue line). In this example, it is quite easy to see the CPU usage anomalies when the blue line exceeds ~3 on the right axis.

For z-score parameters, “Based on the statistical principles of normal distributions, we can assume that any value that falls outside of the range of roughly +3 to -3 is an anomaly.” – GitLab Anomaly Detection Using Prometheus

Therefore, we can create rules to record and alert when we have a z-score outside of +3/-3.

- record: cpu:cpu_used:avg1d
  expr: avg_over_time(cpu_used[1d])
- record: cpu_stddev:cpu_used:stddev1d
  expr: stddev_over_time(cpu_used[1d])

- name: CPU Used Anomaly
  rules:
  - alert: CpuUsedAnomaly
    expr: abs((avg_over_time(cpu_used[5m]) - cpu:cpu_used:avg1d) / cpu_stddev:cpu_used:stddev1d) >= 3
    for: 1m
    labels:
      severity: medium
      source: telegraf
      environment: Production
    annotations:
      summary: "Potential CPU Usage Anomaly Detected"
      description: "A CPU usage anomaly possibly detected for {{ $labels.device }} on {{ $labels.name }}"
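If you also want to graph the z-score, the expression inside the alert could be stored as its own series. This is a sketch rather than part of the original setup: the group name and the record name cpu:cpu_used:zscore are hypothetical, and it relies on rules within a group being evaluated in order, so the mean and standard deviation are recorded first.

```yaml
groups:
  - name: cpu_anomaly_recording   # hypothetical group name
    rules:
      - record: cpu:cpu_used:avg1d
        expr: avg_over_time(cpu_used[1d])
      - record: cpu_stddev:cpu_used:stddev1d
        expr: stddev_over_time(cpu_used[1d])
      # Signed z-score of the last 5 minutes against the 1-day baseline.
      - record: cpu:cpu_used:zscore   # hypothetical record name
        expr: (avg_over_time(cpu_used[5m]) - cpu:cpu_used:avg1d) / cpu_stddev:cpu_used:stddev1d
```

With that series in place, an alert expression could be shortened to abs(cpu:cpu_used:zscore) >= 3.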

Prometheus and Alertmanager Integrations

Prometheus and its alerting component Alertmanager benefit from a great number of popular integrations that can be leveraged by organizations. What exactly are integrations? Let’s use the example of the popular organization messaging application, Slack. Alertmanager has an integration to send messages to a Slack workspace and channel with a highly customizable message format.
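As a rough illustration of the Slack integration, a receiver might look like the sketch below. The receiver name, webhook URL, and channel are placeholders, and the message templates are just one way to format the notification.

```yaml
receivers:
  - name: slack-notifications        # hypothetical receiver name
    slack_configs:
      # Placeholder URL: substitute the incoming webhook for your workspace.
      - api_url: https://hooks.slack.com/services/REPLACE/WITH/WEBHOOK
        channel: '#network-alerts'   # hypothetical channel
        send_resolved: true
        # Go templates over the grouped alerts control the message format.
        title: '{{ .CommonLabels.alertname }} ({{ .Status }})'
        text: '{{ range .Alerts }}{{ .Annotations.description }} {{ end }}'
```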

Here is a short list of Alertmanager integrations:

- opsgenie

- pagerduty

- slack

- VictorOps

- webhook

Alertmanager also has a great number of additional alert integrations available via its webhook receiver that can be explored here.

Another way that integrations can work with Alertmanager is if they are designed to utilize the Alertmanager API. One very useful tool for visualizing alerts comes to mind here: Karma. Karma is designed to visualize alerts using a very modern and unique method of grouping. You can take some action against the alerts, but it is probably best used as a visualization dashboard.

This brings us to Alerta. Let’s dive into Alerta, shall we?

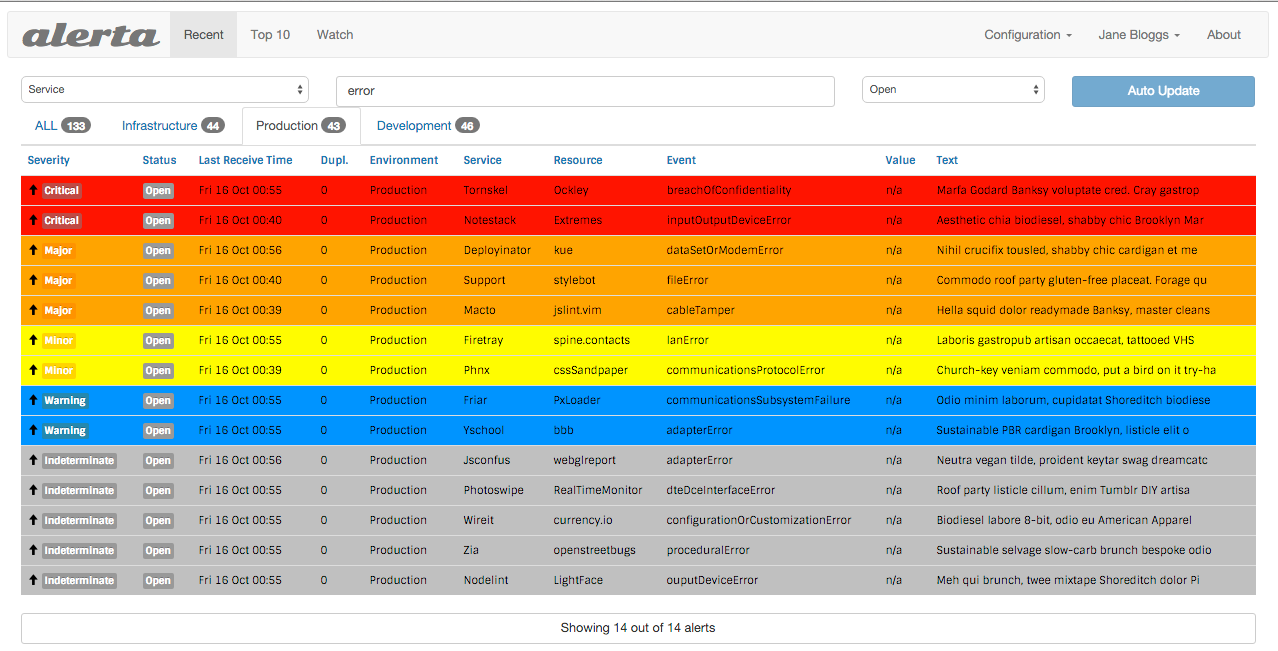

Alerta

Alerta is a fully integrated alerting dashboard that allows NOC/SRE/NRE users to perform actions against alerts, create notes, create blackout windows, and generate reports. It supports a multitude of authentication and authorization mechanisms, alert grouping and correlation, and a rich API.

Configuring Alerta is as simple as defining it as a receiver with webhook_configs in Alertmanager. For example:

receivers:
- name: alerta
  webhook_configs:
  - send_resolved: true
    url: http://alerta-01:8080/api/webhooks/prometheus

So, let’s see it in action with our own alerts!

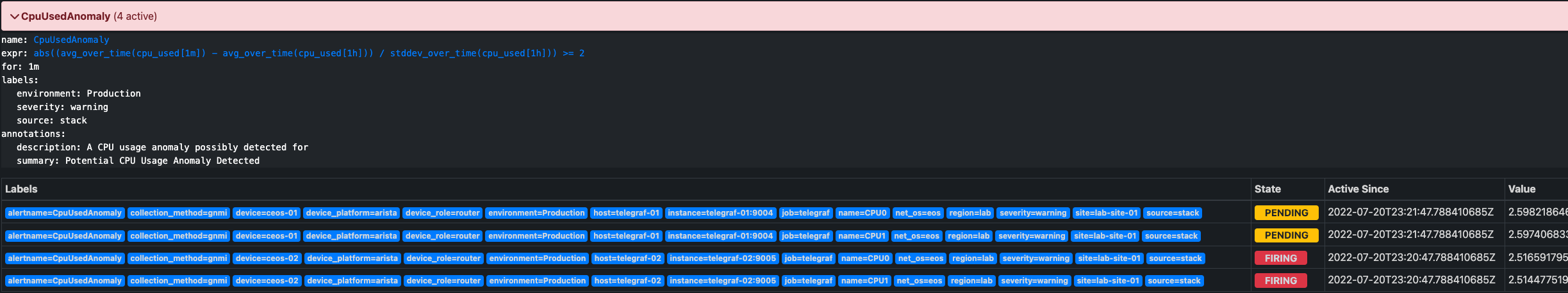

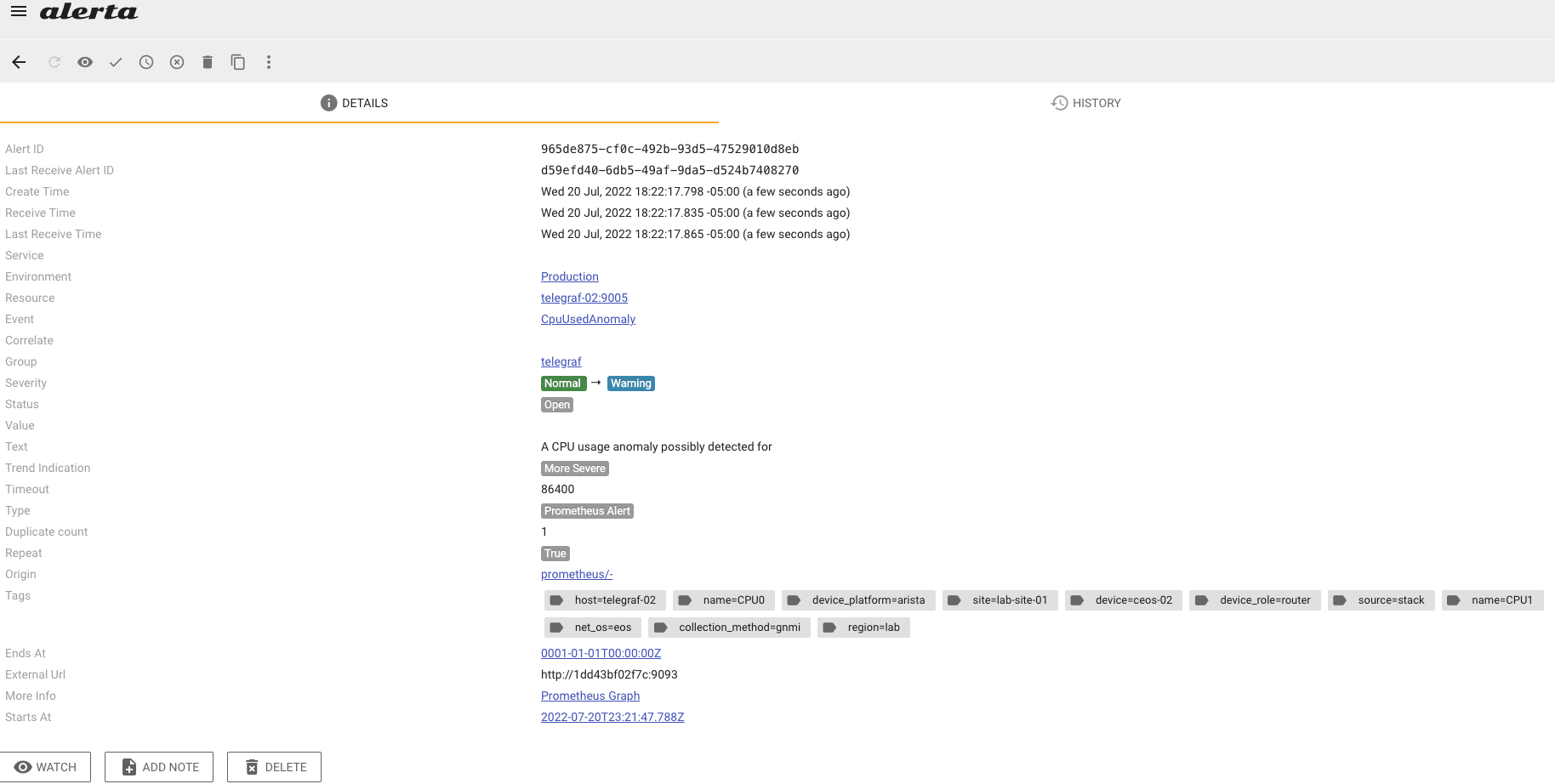

Here, I cause a CPU usage anomaly with a slightly lower threshold for the purpose of actually generating an alert easily. First, the alert is detected and sent to Alertmanager by Prometheus.

Then, Alertmanager routes the alert to Alerta based on the labels in the alert. Here, we see the alert list, and you can see the CPU Anomaly Alert.

Clicking the alert will display it in detail along with all of the label sets and any other associated data.

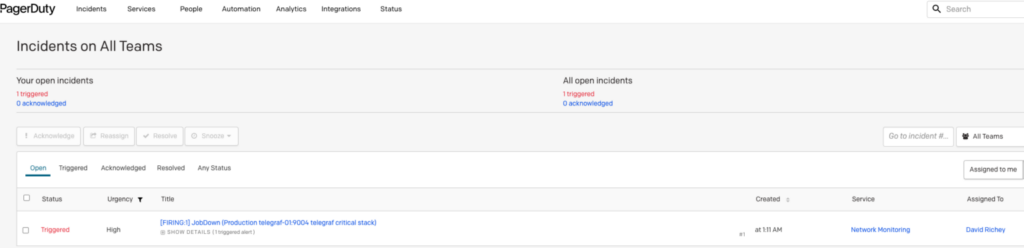

PagerDuty

PagerDuty is an industry-leading incident management system with over 650 integrations! It handles incidents, runbook automation, on-call, and bizops, all from a single SaaS platform.

In this section, I will demonstrate just how easy it is to integrate Alertmanager with PagerDuty’s Events API v2 endpoint, which Alertmanager fully supports. We will configure our example to send only alerts labeled critical to PagerDuty. It is crucial to think of your on-call staff, SLAs, and alert exhaustion (not to mention on-call PTSD). I always try to approach severity classification with the following mantra: “Is this serious enough to wake someone up at 3am to respond?” Again, I fall back to the Google SRE Book, specifically Ch. 4 – Service Level Objectives.

Alertmanager Config

---
global:
  resolve_timeout: 30m

route:
  # Let's set a default route, as required
  receiver: alerta
  routes:
  - group_by:
    - alertname
    match:
      source: stack
    receiver: alerta
    # Routes are evaluated in order and stop at the first match;
    # continue lets critical alerts also reach the PagerDuty route below.
    continue: true
  - group_by:
    - alertname
    match:
      source: stack
      severity: critical
    receiver: pagerduty

receivers:
- name: pagerduty
  pagerduty_configs:
  - routing_key: <your_pager_duty_eventsv2_routing_key>
- name: alerta
  webhook_configs:
  - url: http://alerta-01:8080/api/webhooks/prometheus
    send_resolved: true
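The PagerDuty receiver can also carry a little more context into the incident. The sketch below is an optional refinement, not the configuration used for the screenshots: the description template and the fixed severity value are assumptions chosen to match the critical-only routing described above.

```yaml
receivers:
  - name: pagerduty
    pagerduty_configs:
      - routing_key: <your_pager_duty_eventsv2_routing_key>
        # Only critical alerts are routed here, so the PagerDuty
        # severity is pinned to match.
        severity: critical
        # Incident title built from the grouped alert's name and summary.
        description: '{{ .CommonLabels.alertname }}: {{ .CommonAnnotations.summary }}'
        send_resolved: true
```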

Conclusion

Phew! Now that was a lot to cover in just one blog! As we could easily go down the rabbit hole on each of these topics, I will be providing a list of links for follow-up reading, especially around the anomaly detection, as it is an entire blog unto itself.

Together, we explored some advanced alerting concepts, such as the Deadman, where we learned that we could alert on missing metrics. Then came Recording Rules and their power to store pre-computed metrics that would otherwise become computationally expensive to query. These same recording rules then enabled us to move on to our next topic, standard deviation. There we saw how to get out of the threshold alert rule game and started exploring standard deviation-based alerts that have the power to alert us to anomalous behavior.

Finally, our guest stars of the hour: Alerta and PagerDuty, two of our favorite Prometheus alerting integrations here at NTC (with an honorable mention of Karma). We saw how to leverage the power of Alerta for alert management with RBAC and hand-off and how to page our on-call staff when things are really critical.

I hope you enjoyed this blog post. Stay tuned for the next installment in our telemetry series! Rumor has it, a wild antlered animal is the main star!

-David Richey

Links for further reading:

- https://about.gitlab.com/blog/2019/07/23/anomaly-detection-using-prometheus/

- https://blog.davidvassallo.me/2021/10/01/grafana-prometheus-detecting-anomalies-in-time-series/

- https://medium.com/qubit-engineering/using-seasonality-in-prometheus-alerting-d90e68337a4c

- https://pagerduty.com

- https://alerta.io
